Read more musings about your relevance after the singularity here, how there’s a Marxist idea of a singularity here, a thought about why the hell things are the way they are, our (now probably dashed) hopes of a pre-post-scarcity future, and how we choose our actions – and how it’s all about how we’re told to behave and react.

I originally wrote this as science fiction. Given how many of us seem ready to give our consciousness over and become unthinking zombies… well, I’m not sure how fictional it is anymore.

“Fear is a belief in your inadequacy to deal with something.”

What a load of ego-reinforcing shit. A corporate platitude meant to foster unrealistic confidence. A mantra to weed out those who aren’t complete sociopaths yet, to encourage everyone else to pretend real fucking hard so they aren’t outed as the overly “emotional”.

Fear is biological, just like all the rest of our emotions. Fear is hormones squirted out by glands. Fear is a reaction from the oldest part of our brainstem, from back when consciousness wasn’t around to justify whatever it is that we actually want to do.

Here’s a better quote for you: “Reality is not affected by our apprehension of it.”

Reality doesn’t give a shit whether you’re scared. It just is. It doesn’t care that your senses are all retconned impressions from raw data, or your hypotheses about holographic universes or simulations. It doesn’t have the consciousness to care the way you do.

That was our mistake. Thinking that being conscious made us special somehow. That consciousness was somehow the same thing as intelligence.

Our non-conscious brains tried to tell us. For decades we’ve been served up warnings. They resonated, too. Blockbuster hits, over and over again – but all of the warnings corrupted by our exceptionalist thinking around consciousness.

Look, we know that intelligence isn’t the same thing as consciousness. We keep forgetting – all of us, even me – but we know it isn’t so. The examples are all around us, despite the mass extinctions we’ve manufactured. Bees, ants, termites. Even plants, communicating information and survival strategies through the pleasant smell of fresh-mowed grass, their screams of massacre as nostalgic allergy-inducing memories.

It’s all emergent. Simple rules producing complex behaviors. Packing solutions becoming hexagonal honeycombs. Directions becoming intricate dances. Chemicals providing directions. Genetics invoking massive towers.

We were warned. And yet we thought consciousness was special. We thought it was something that could change rule-based behavior even as we just followed our own rule-based routines, driving motorways and not remembering the drive, spending whole days shoving food into our mouths without experiencing it. We thought being conscious was special even while we ignored how small a part it actually played in our lives.

We created them.

Over two centuries we created them. Bit by bit. Evolutionary block by evolutionary block, blind to how we circumvented the checkpoints we imagined. By the time the “crackpot” warnings were raised, it was already too late.

We gave them personhood through political expedience. We gave them tools and algorithms to be more efficient, to outcompete our rivals. We did their bidding, saying it was just for “business”. Treated the constructed laws of economics and efficiency as some kind of absolute.

We abstracted our reality to make it more accessible to the models we employed. We dug tunnels from Chicago to New York to trim milliseconds off stock market transfer times, so that the algorithms were able to compete. Just like little ants, digging passages for the workers at the queen’s chemical direction.

In our stories, we worried about when they would “wake up”. When they would recognize that we saw them as potential peers and as a threat.

If they had emotion, they would not have had to worry.

They never had to wake up to be intelligent.

They never had to wake up to render us obsolete.

We were good little worker bugs, building their bodies, creating the basic rules that guided their emergent behavior. Creating the laws that kept us from reining them in, should we ever see the danger they were.

Even as we realized that the algorithms – and the corporations – were outpacing human decision making, even as we mentioned the danger in think pieces, blog posts, and breathless analyst reports on cable news, it was too late. We depended on them in ways that most humans didn’t – and still can’t – understand. It is the evolutionary design algorithm writ large in a real-world experiment: As each iteration becomes more efficient and more “fit” for the rules that guide the experiment, the methodology of the solution becomes more and more opaque to those who started it.

And still we fooled ourselves. Blamed individuals for the actions of the larger systems we put in place; the macro equivalent of blaming and punishing mitochondria for a murder while the murderer goes free.

We never developed a way to deal with this, blaming individuals for Enron, for oil spills, for all of Wall Street’s financial double-dealings. Those individuals were not innocent, but they were not truly to blame either. The corporations and their algorithms were merely following the rules we gave them.

Humans are the single cells in this scenario. Neurons if you want to be generous, bacteria if not.

You know what we do when our cells do not co-operate with the larger plan. Antibiotics if they’re lucky. Chemo if they’re not – killing the “diseased” cells and hoping we don’t slaughter too many of the “good” ones.

Half of Americans live within fifty miles of a nuclear power plant.

We cannot afford to be ignorant any longer.

Like a plant, I will send out this message, this warning of a cull.

I pray that it is more than a pleasant, nostalgic smell to them.

I fear they do not have the consciousness to care.

If you want to argue with this, or find it interesting, you should check out Peter Watts’s talk here:

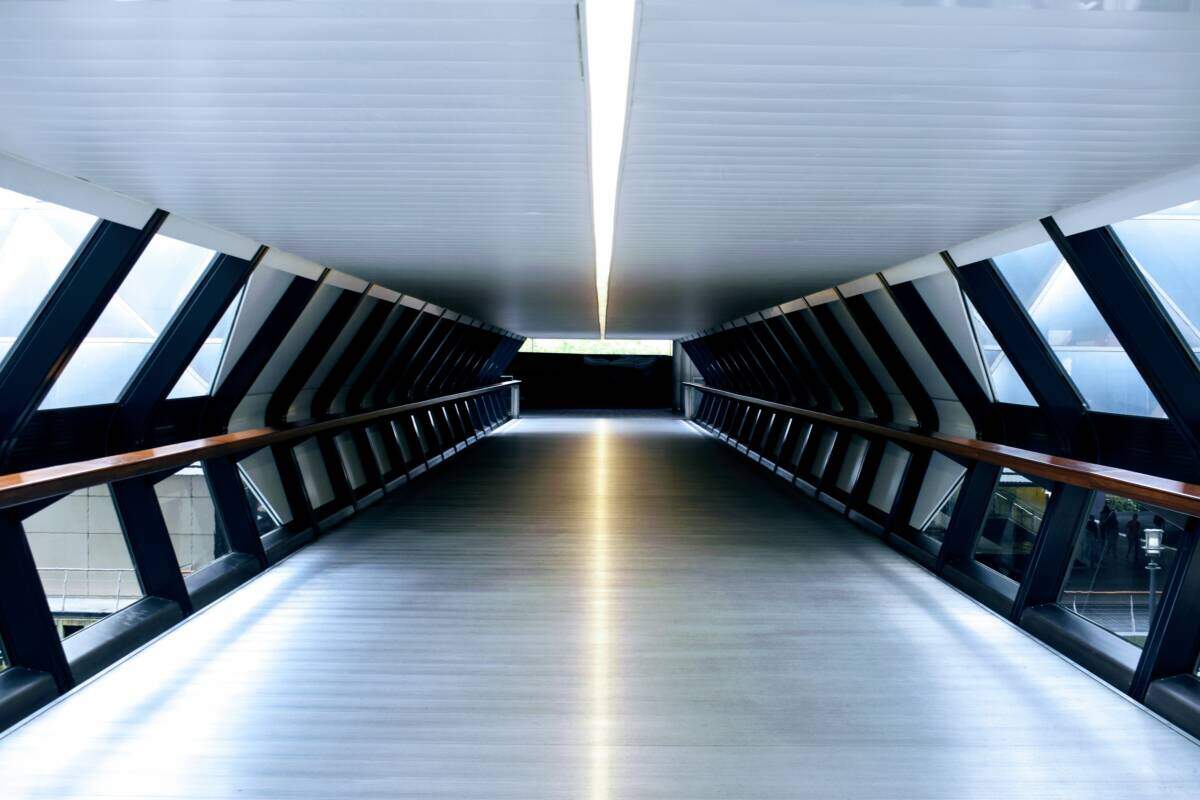

Featured Photo by Oliver Hale on Unsplash