I’ve been using generative AI to some extent for about a year. I’ve used it both for artwork generation and for text generation. {1}

I’m an end user here, so I don’t have any special insights about the back-end of the technology.

Regardless, I’ve noticed a couple of things:

- It’s impressive at first blush. I mean, you press a button and something that would take you a long time to do (if you could do it at all) is completed in seconds. It takes less time, for example, to create an NPC portrait using a tool like hotpot.ai than it would to use image search to find an appropriate picture. {2}

- It’s simplistic. I was unsurprised by recent research showing that it made discharge notes from a doctor "easier to understand", because it did so by lowering the reading level from an 11th-grade level to a 6th-grade level.

- It must be double-checked, carefully. The same research found that the AI-generated discharge summaries "were rated entirely complete in 56 of 100 reviews, but 18 reviews noted safety concerns involving omissions and inaccuracies." The errors I’ve seen are sometimes very obvious (an extra head, or a figure holding a sword by the blade) and sometimes subtle but significant, such as mixing up dates in a text passage, emphasizing a minor detail instead of a major one, or referring to software packages that don’t exist. {3}

- It’s repetitive. So repetitive. Both in art and in even the most banal of text. The same phrases, the same linking words, the same sentence structure. Over and over and over again. For example, I used an AI model to summarize four of my recent blog posts. They’re actually pretty good summaries of the posts, but reading them in a row definitely makes the repetitive template noticeable. {4}

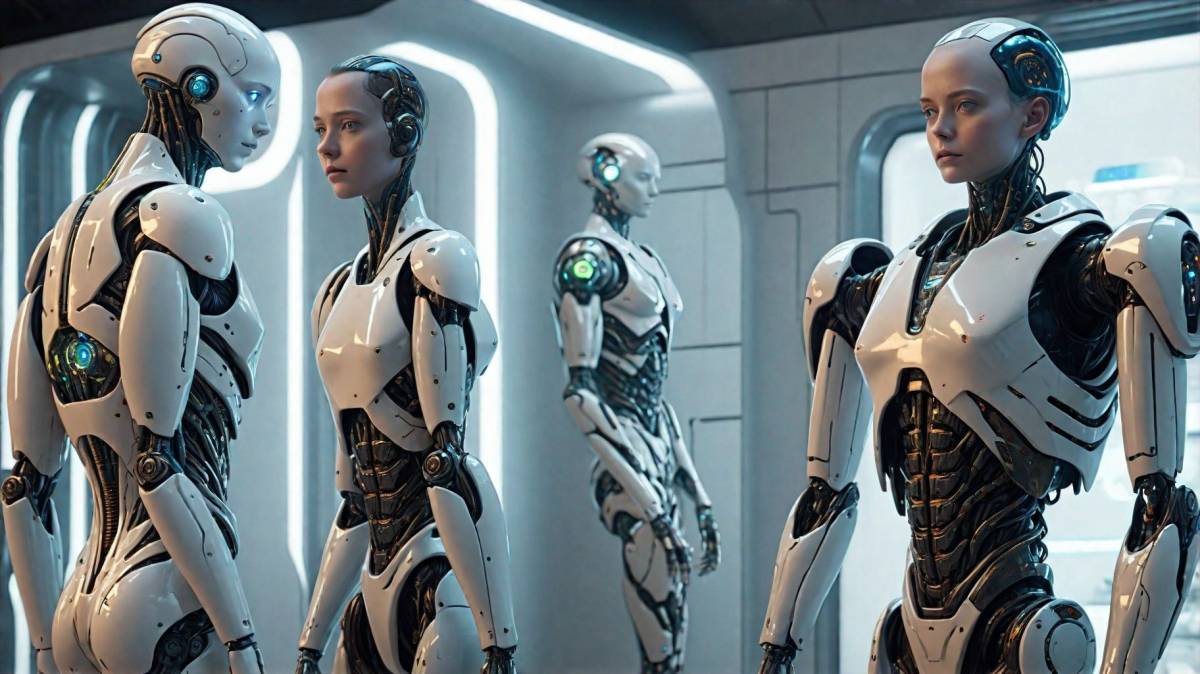

These images (generated by me) demonstrate all of these points together. At first glance, they look really cool. They’re pretty straightforward. But there are errors (sometimes significant ones) in each image, and there’s definitely a lot of repetition going on, even though these did not have the same prompt, and not even the same image style. (Click the images to embiggen.)

All of these together mean that, at present, AI/ML solutions in art and text are the off-brand discount store versions of the real thing.

See, if you buy stuff at these discount stores, it’s cheaper than the regular version… in all senses of the word. For example, "not only are there differences in the amount of energy in name-brand versus value-brand batteries, a Southeastern Louisiana University researcher also found that, more specifically, dollar store batteries are sold with less stored energy than name-brand batteries." {5}

At present, the text, code, and art produced by AI may seem to fill a need in the same fashion that those knock-offs do. But like the cheap knock-offs, it’s of questionable quality and doesn’t hold up on its own.

This does not reduce the societal and economic risks of AI/ML. Certainly, advances in the technology may minimize the effects that I’m currently seeing. And the fact that "shrinkflation" exists at a time of record corporate profits makes it very clear that when a company’s values are centered on profit alone, the management class may not care that the work produced is substandard, as long as it makes a profit now.

But those risks are not strictly due to the algorithms.

Those risks are due to greedy people.

EDITED TO ADD: Well, that didn’t take long. Northwell Health isn’t going to bother paying musicians any longer: https://www.beckershospitalreview.com/digital-marketing/ai-generated-music-just-1-part-of-northwells-marketing-strategy.html

{1} Largely either to assist me in my own work, or for things like an NPC portrait for a game I’m running. For paid work, I pay artists and authors.

{2} Because face it, I’m not commissioning a portrait for the innkeeper the players have suddenly taken a personal interest in.

{3} Which could be exploited as a vector for all sorts of malware.

{4} The summaries are at https://gist.github.com/uriel1998/3a5a85af5b356d0b39b74be582da27d1

{5} John Oliver does an excellent deep dive on dollar stores: https://www.youtube.com/watch?v=p4QGOHahiVM