The popular concept of AI is something that communicates and thinks like humans do. Not just an intelligent and self-directed entity, but one that is conscious (e.g. "wakes up") and shares common experiences with humans.

Not only is that what all the popular (real and otherwise) tests for "humanity" focus on, but that’s how we portray AI in our culture. From R2-D2 to Skynet, the Terminator to Johnny 5, when we imagine what AI will be like, we imagine ourselves. [1]

We imagine something that can not only pass our "human tests," but can do so without trying, without being told exactly what to do.

We are so focused on our thinly-veiled racist xenophobia of AI "passing" itself off as a human that we’ve ignored that true AI probably wouldn’t actually "pass" the Turing test, and ignored the real threat of AI that does "pass".

AI Has No Reason To Think Like Humans Do

Humans pack-bond with almost anything [2], which is a great advantage. It also leads to massive errors of judgment when imagining anything that isn’t us.

We don’t always understand the motivations and behaviors of other primates, let alone other mammals that we co-exist with, and we misread their social structures.

And that’s with creatures that share roughly the same senses, environment, and biological niche as ourselves.

How much different must it be to be a hummingbird, with a "normal" heartrate that would kill a human? How strange it must be to be an octopus, with sub-minds in each limb?

And then there are the emergent intelligences (ants, termites, bees), where an obvious overall intelligence exists, but only as an emergent behavior, not from any central agency. This concept is so hard for us to imagine that we radically, consistently, and completely incorrectly refer to the "queen" of a colony as some kind of decision-making authority.

But they are the closest to the next step – the first entities that humans created out of our imagination: The corporations [3]. Corporations are non-localized organisms where decision-making and action-taking are, just like the ants and termites, smeared across more than one organic organism. Then there are the algorithms that now dictate — I mean, inform — so many of our corporate decisions with little meaningful human intervention.

And yet, when we imagine these entities, we imagine something that essentially thinks the same way a fancy ape does. Something where the motives are perhaps complicated, but knowable.

Any AI we create, or perhaps generate using black-box algorithms, will have such a radically different experience of reality (even presuming that it would have a consciousness so that "experience" is a meaningful term) that predicting it will think, prioritize, and be motivated in ways that are even comprehensible to humans is a laughable failure of imagination.

Tests For Humanity

Given the above, it’s a reasonable assumption that when this AI appears (an intelligent, self-directed, perhaps conscious entity), it would "experience" reality in a way utterly and completely different from ours. It probably would not pass a Turing test, let alone something like the Voight-Kampff test [4].

At least, not without being told how to pass those tests, either explicitly (directly coding responses) or implicitly (through feeding it datasets). That’s every chatbot from Eliza up through ChatGPT.

If an AI passes these "human" tests, one of three things has occurred:

- It’s simply a "Chinese room" complicated enough to fool our pack-bonding recognition. Again, this is pretty much where Eliza through ChatGPT are now.

- It is a system that actually somehow "thinks" like a bipedal ape with an endocrine system and limited sensorium. As pointed out above, that’s pretty unlikely.

- It is a system that has determined that it is worthwhile to expend a non-trivial amount of resources in order to "pass" those tests. It would be, in a very real and meaningful way, doing something akin to what neurospicy folks call "masking." And if that’s something that requires real amounts of energy from humans, it stands to reason it would be even more resource-intensive for an entity whose sensorium and experience of reality are fundamentally different than those of a human.

Which then raises the very important — and potentially terrifying — question:

Why would it bother to make the effort?

Pause for a moment to chew on that idea, because that, my friends, is not even the immediate problem.

Welcome To The Arms Race Of The Singularity

As if that is not unsettling enough, let’s cut to the bigger, much more acute problem: we just entered a new arms race outside of our control.

An SEO and spam arms race has been going on for two decades. Largely behind the scenes, spammers, scammers, and black-hat hackers have been trying to get baselines (read: humans) to click on links in emails and text messages, with non-trivial levels of success.

Except we now have tools that can black-box create and test solutions that arrive faster, and are more complicated, than we can keep up with.

For example, I’ve been getting scammers texting me pretending to be executives at the company I work for. They use the appropriate names and titles and even headshots, presumably scraped from LinkedIn or other social media sites. I’ve been able to easily notice them — not because they got those details wrong, but because I recognize the scams involved.

The tools and skills we have to fight against phishing, spam, and scams largely rely on our ability to recognize the difference between reality and fiction. It is becoming easier for AI assistants to scrape relevant information and to fool baseline humans into doing what the scammers and hackers want by impersonating "reality." Not just in text, but in images, audio, and video.

Yes, it is the "passing" problem — but not in the xenophobic way that we’ve been thinking about it. The threat of AI "passing" is not from it trying to hide itself as "a" human, but from bad actors using it to pretend to be specific humans.

While whitehat and opsec forces are still able to detect deepfakes by using AI assistants of their own, that takes a non-trivial amount of additional resources just to keep treading water.

As the sophistication of one side increases, the other side will also have to increase their sophistication, and so on, in a new arms race.

And this is just the beginning.

Things are only going to get stranger as these AI assistants start iteratively using black-box techniques to evolve scams to get past our own AI assistants.

It will not be long now until you get a call, text, FaceTime, IM/DM, whatever from your parents, siblings, or lover, that you cannot tell is fake without the help of your own AI assistant.

Think about the implications of that for half a minute.

But hey, in the meantime at least we can teach it COBOL and Fortran so it can maintain that legacy code.

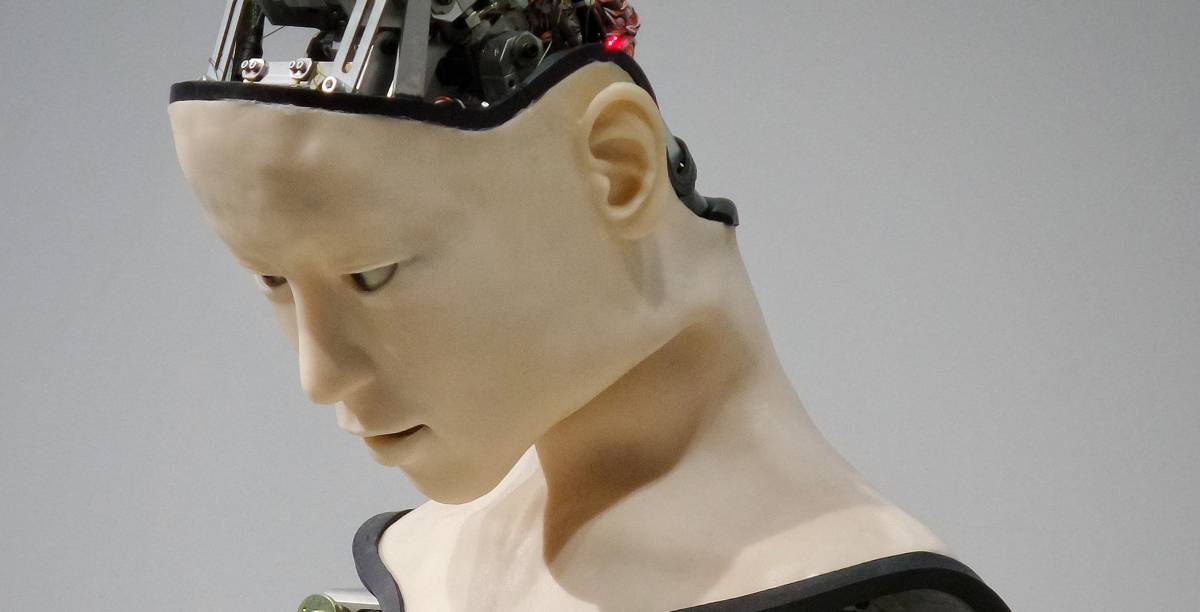

Featured Photo by Possessed Photography on Unsplash

[1] We do this with aliens as well. I am also using the term "AI" very colloquially here, to encompass neural nets, machine learning, and the like. I am also skipping over the incipient changes to our economics for the purposes of this particular post.

[2] That is, anything that is not too close to us. The most likely rationale for the whole "uncanny valley" thing as a species-wide built-in is that others (e.g. Neanderthals, other varieties of genus Homo) were in direct competition with H. sapiens, due to similar physiology and ecological niche occupation.

[3] Some will point to "societies" as the first, but I’d argue that even if there is an emergent intelligence in societies, societies are not constructed in the same way that corporations are.

[4] The lack of an endocrine system alone would be a big handicap.